Nvidia’s recent decision to open source the KAI Scheduler marks a significant milestone in the interplay between enterprise-grade AI solutions and the open-source ecosystem. By releasing this tool, originally developed within the Run:ai platform, under the Apache 2.0 license, Nvidia not only emphasizes its commitment to innovation but also lays the groundwork for a collaborative community around AI infrastructure. The initiative reflects a forward-thinking approach to tech development, fostering reliability and flexibility in systems that have become essential in today’s data-driven world.

As demand for AI capabilities accelerates, establishing a foundation where developers and researchers can contribute to an accessible codebase is vital. Nvidia’s Ronen Dar and Ekin Karabulut have elaborated on the KAI Scheduler’s features, presenting a well-rounded examination of its purpose and functionality. Because the scheduler is Kubernetes-native, it can be integrated into existing Kubernetes-based infrastructure, improving the way organizations handle machine learning (ML) and AI workloads.

The Challenges of Managing AI Workloads

The complexity of managing Graphics Processing Unit (GPU) and Central Processing Unit (CPU) resources has long posed a substantial challenge for IT professionals. Traditional resource schedulers often falter when faced with the unpredictable nature of AI workloads: fluctuating demands can create significant bottlenecks, leading to downtime and inefficiencies. As organizations scale their AI endeavors, outdated scheduling methods simply cannot keep up with the pace required.

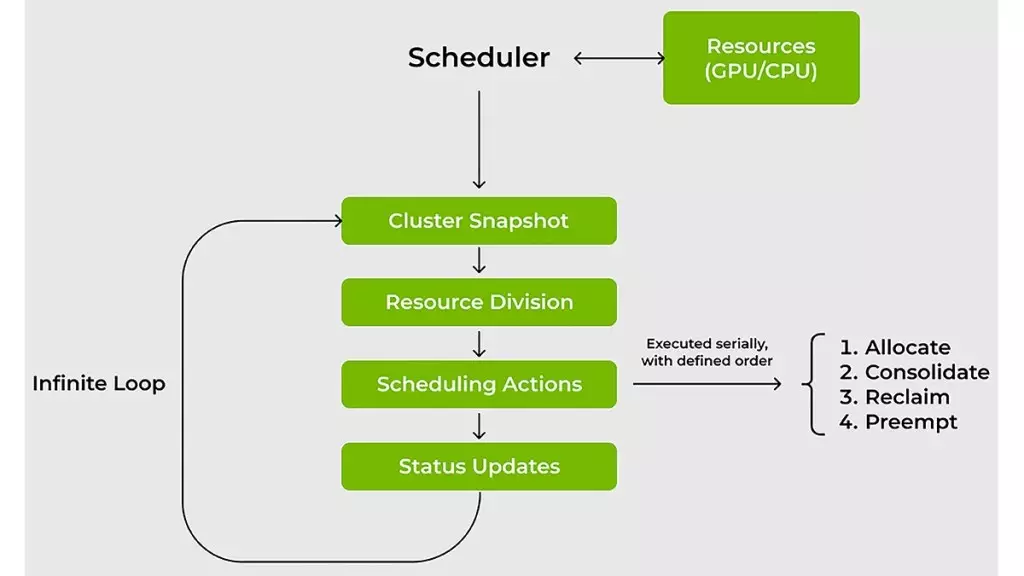

The introduction of the KAI Scheduler offers a refreshing solution to these long-standing issues by addressing critical pain points such as fluctuating GPU demands, resource allocation guarantees, and enhanced connectivity between various AI tools and frameworks. The scheduler dynamically recalibrates resource allocation in real time, efficiently adapting to current workload requirements, while minimizing the need for constant manual adjustments by system administrators.

Innovative Scheduling Techniques

One of the standout features of the KAI Scheduler is its approach to handling tasks. It combines gang scheduling (all pods of a job are started together or not at all), GPU sharing, and hierarchical queuing, with the aim of substantially reducing wait times. This means teams can submit batches of jobs and walk away rather than constantly monitoring their resources, saving considerable time in machine learning projects.
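To make the all-or-nothing idea behind gang scheduling concrete, here is a minimal sketch in Python. It is not KAI Scheduler code: the function name `gang_schedule`, the node names, and the first-fit placement rule are assumptions chosen purely for illustration.

```python
from typing import Dict, List, Optional


def gang_schedule(free_gpus: Dict[str, int],
                  pod_requests: List[int]) -> Optional[Dict[int, str]]:
    """Place every pod of a job, or none of them (illustrative only).

    free_gpus maps a hypothetical node name to its free GPU count.
    pod_requests lists the GPUs each pod of one job needs.
    Returns {pod_index: node_name}, or None if the whole gang does not fit.
    """
    remaining = dict(free_gpus)  # work on a copy so a failed attempt changes nothing
    placement: Dict[int, str] = {}

    # Try the largest pods first; they are the hardest to place.
    order = sorted(range(len(pod_requests)), key=lambda i: -pod_requests[i])
    for idx in order:
        need = pod_requests[idx]
        # First-fit over nodes in name order (an arbitrary, deterministic choice).
        node = next((n for n in sorted(remaining) if remaining[n] >= need), None)
        if node is None:
            return None  # one pod cannot be placed, so the whole job waits
        remaining[node] -= need
        placement[idx] = node

    free_gpus.update(remaining)  # commit only after every pod has a node
    return placement


if __name__ == "__main__":
    cluster = {"node-a": 4, "node-b": 2}
    print(gang_schedule(cluster, [4, 4]))     # does not fit as a whole
    print(gang_schedule(cluster, [2, 2, 2]))  # fits, so all three pods are placed
    print(cluster)
```

The design point is the copy-then-commit step: capacity is reserved only once the entire job fits, so a distributed training job never ends up holding some GPUs while waiting indefinitely for the rest.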

Moreover, the KAI Scheduler employs bin-packing and workload-spreading strategies to keep resource utilization efficient even as demand fluctuates. Bin-packing consolidates workloads onto fewer nodes to reduce fragmentation, while spreading distributes them evenly across nodes. By mitigating resource fragmentation and promoting equitable distribution, the scheduler prevents situations where a handful of researchers hold an unjustified share of GPU resources while others are left waiting, even when some of that capacity sits idle.
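The difference between the two placement strategies can be shown with a small Python sketch. This is a simplified illustration under stated assumptions, not the scheduler's actual scoring logic: `pick_node`, the strategy names `"binpack"` and `"spread"`, and the node names are all hypothetical.

```python
from typing import Dict, Optional


def pick_node(free_gpus: Dict[str, int], request: int,
              strategy: str) -> Optional[str]:
    """Choose a node for one workload (illustrative only).

    "binpack" picks the feasible node with the fewest free GPUs, which
    fills nodes up and keeps large gaps available for large jobs.
    "spread" picks the feasible node with the most free GPUs, which
    distributes load evenly across nodes.
    Ties are broken by node name so the result is deterministic.
    """
    candidates = {n: f for n, f in free_gpus.items() if f >= request}
    if not candidates:
        return None  # no node can host this request right now
    if strategy == "binpack":
        return min(candidates, key=lambda n: (candidates[n], n))
    if strategy == "spread":
        return min(candidates, key=lambda n: (-candidates[n], n))
    raise ValueError(f"unknown strategy: {strategy!r}")


if __name__ == "__main__":
    cluster = {"node-a": 8, "node-b": 3, "node-c": 5}
    print(pick_node(cluster, 2, "binpack"))  # tightest fit
    print(pick_node(cluster, 2, "spread"))   # emptiest node
```

With bin-packing, the 2-GPU request lands on the node with the least spare capacity, leaving the 8-GPU node intact for a later large job; with spreading, the same request goes to the emptiest node instead.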

These capabilities not only streamline operations but also enhance collaboration between teams—ensuring that all members have equitable access to necessary resources and facilitating a more fluid workflow across projects.

Simplifying Integration with AI Frameworks

As the landscape of AI tools continues to expand, the ability to connect different workloads with various AI frameworks poses another significant challenge. Historically, professionals faced an arduous task of managing manual configurations to ensure functionality across disparate tools like Kubeflow, Argo, and Ray. The KAI Scheduler aims to eliminate this complexity through an innovative built-in podgrouper that automatically detects and connects with these frameworks.

This feature accelerates the prototyping process, reducing time-to-market for new AI initiatives and enabling teams to focus their energies on experimentation and deployment rather than on configuration. By simplifying these connections, the KAI Scheduler represents a paradigm shift in how AI teams can work—making it easier to harness the full potential of contemporary AI technologies without getting bogged down by intricate setup processes.

In sum, Nvidia’s KAI Scheduler stands out not merely as another advanced tool in the AI toolkit, but as a solution that embodies the spirit of open-source collaboration and technological advancement. Its design addresses the unique challenges of managing AI workloads while fostering a dynamic and cooperative environment in which innovation can thrive.