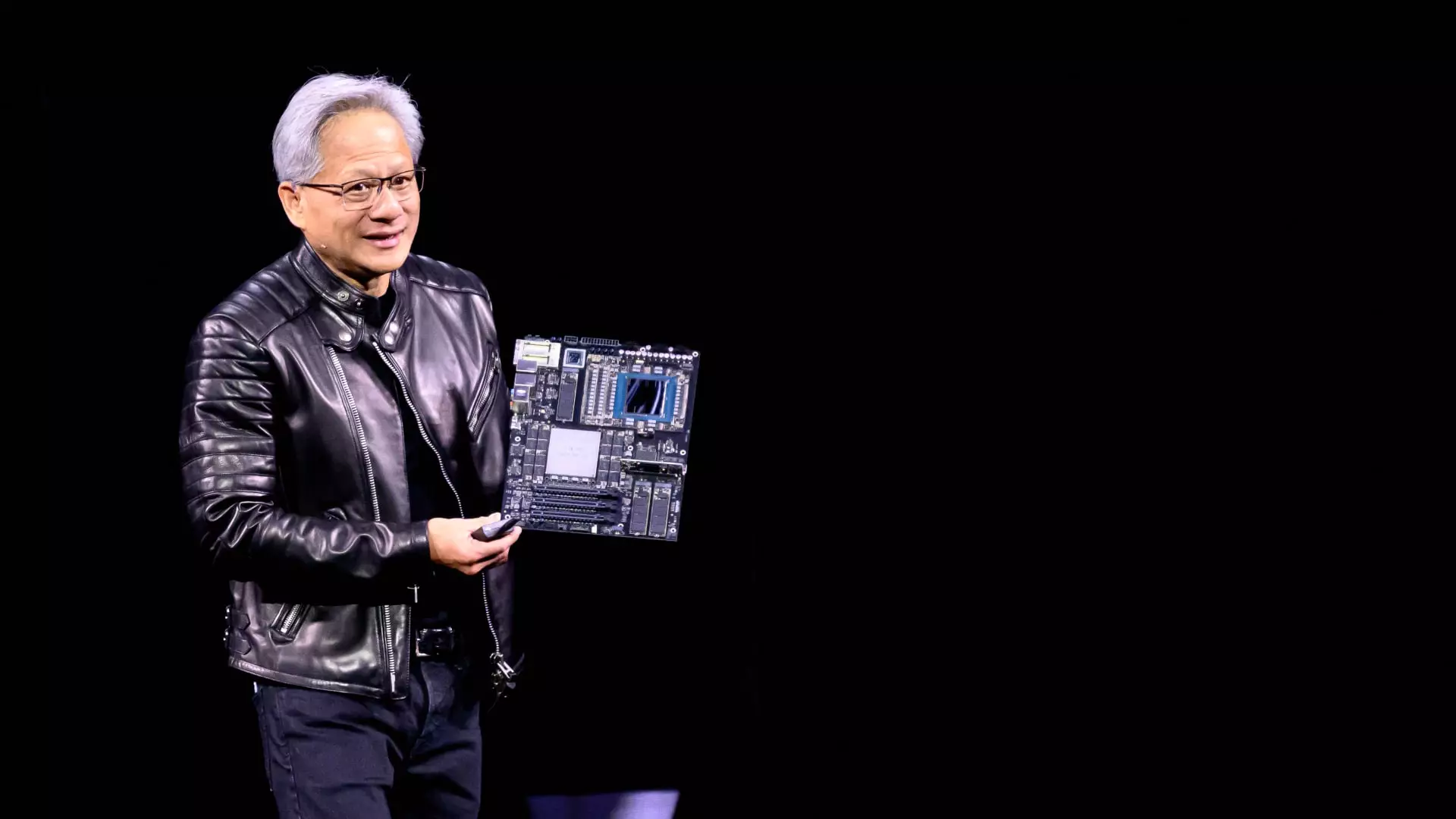

In a world increasingly driven by artificial intelligence (AI) and rapid technological advancement, Nvidia’s CEO Jensen Huang delivered a powerful message at the recent GTC conference: speed is the new currency. The sheer enthusiasm and forward-thinking approach during his two-hour keynote highlighted not just the capabilities of Nvidia’s upcoming GPUs but also the urgency for clients to focus on the fastest chips available. This core philosophy, encapsulated in Huang’s assertion that “speed is the best cost-reduction system,” lays the groundwork for understanding how companies can manage their AI infrastructure effectively in a competitive market.

The Economics of Efficiency

Huang walked through live calculations of the cost-effectiveness of AI processing, underscoring that hyperscale cloud customers care most about return on investment and operating costs. That economic focus comes as demand for AI capabilities grows rapidly. The Blackwell Ultra systems, due for release this year, promise data centers up to 50 times more revenue than the preceding Hopper generation because of their higher performance. As companies scramble to keep pace with AI processing needs, that claim could fundamentally alter how businesses allocate capital.

Moreover, by showing how each chip’s efficiency works out as a cost per unit of output, Huang demystified a traditionally opaque area of investment for potential customers. As data centers prepare unprecedented budgets for AI infrastructure, purchasing decisions now hinge on how quickly they can get access to the latest hardware.
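The cost-per-output reasoning can be made concrete with a little arithmetic. The sketch below is only an illustration: the function name, the hourly costs, and the throughput figures are all hypothetical and are not numbers from Huang's keynote. It shows how a system that costs more per hour can still be cheaper per unit of output if it is sufficiently faster.

```python
def cost_per_million_tokens(hourly_cost_usd: float, tokens_per_second: float) -> float:
    """Return the cost in USD of producing one million output tokens."""
    tokens_per_hour = tokens_per_second * 3600
    return hourly_cost_usd / tokens_per_hour * 1_000_000


# Hypothetical figures, chosen only to illustrate the arithmetic.
older = cost_per_million_tokens(hourly_cost_usd=2.0, tokens_per_second=1000)
newer = cost_per_million_tokens(hourly_cost_usd=4.0, tokens_per_second=4000)

print(f"older system: ${older:.4f} per million tokens")
print(f"newer system: ${newer:.4f} per million tokens")
print(f"newer / older cost ratio: {newer / older:.2f}")
```

Under these assumed numbers, the newer system costs twice as much per hour but produces four times the output, so its cost per million tokens is half that of the older one. Whether real deployments see a similar effect depends on actual pricing, utilization, and workload.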

The AI Infrastructure Challenge

The race to equip cloud providers such as Microsoft, Google, Amazon, and Oracle with cutting-edge GPUs is at the heart of Nvidia’s strategy. These four giants have reportedly acquired over 3.6 million of the new Blackwell chips, a significant jump from the previous generation, but it remains uncertain how much capital they will keep committing to such costly assets. Huang also pointed to budgets that have yet to be spent, signaling that decisions about “hundreds of billions” of dollars’ worth of AI infrastructure are already underway.

This projected financial climate feeds into a larger narrative of urgency, underscoring the necessity for Nvidia’s products to meet and surpass the growing demands placed by these companies. Huang posits that cloud providers are not merely participants in this AI era; they are laying foundational stones for an age that will likely be governed by those who can leverage the most powerful AI tools effectively and efficiently.

Custom Chips vs. Versatile GPUs

A significant point of contention is the custom chips that cloud providers often develop for themselves. Huang dismissed these dedicated circuits as a serious threat to Nvidia’s dominance, arguing that their lack of flexibility limits their usefulness for fast-evolving AI algorithms. His skepticism isn’t unfounded; the tech industry has seen many custom AI chip initiatives falter before reaching the market. Huang’s candid remark that “a lot of ASICs get canceled” reinforces Nvidia’s position as the go-to option for organizations seeking reliable and powerful processing capabilities.

This distinction positions Nvidia not just as a supplier but as a partner in innovation. Its roadmap, which already includes products slated for 2027 and 2028, reflects a commitment to remaining at the forefront of AI development and eases concerns cloud companies might have about long-term support and the evolution of the technology.

Strategic Necessity for Investment and Innovation

Ultimately, Jensen Huang’s focus on the largest infrastructure projects underscores a pivotal reality: in the burgeoning AI landscape, companies must prioritize the most advanced systems to remain relevant. His pointed question to potential investors, “What do you want for several $100 billion?”, urges stakeholders to reconsider their current approaches and recognize that investing in cutting-edge technology isn’t just an option; it’s imperative for survival and success.

With the stakes higher than ever, Nvidia is not merely setting the pace; it is defining the future. The challenge rests not only on capitalizing on available resources but also on navigating the crucial crossroads between investment, speed, and innovation in an exhilarating and ever-evolving domain.