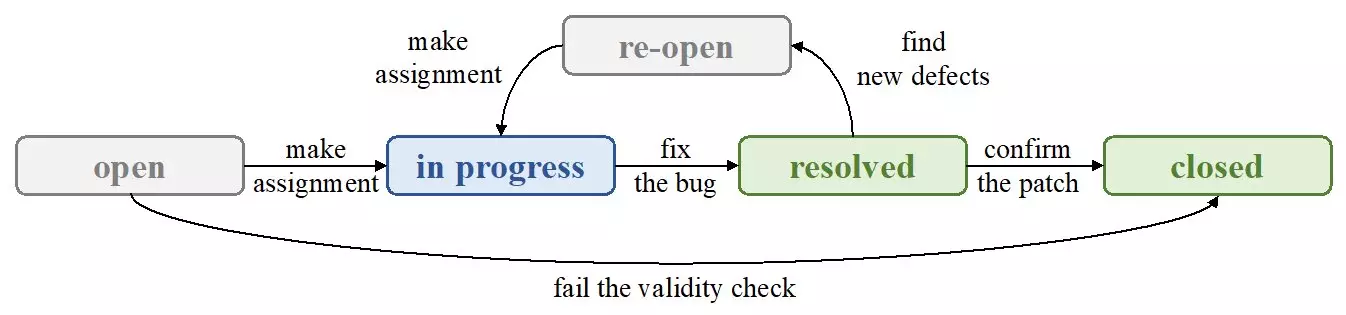

Automatic bug assignment has attracted considerable interest in recent years. Engineers rely heavily on textual bug reports to identify and fix bugs, but noise in these reports can undermine automatic bug assignment systems, particularly given the limitations of traditional Natural Language Processing (NLP) techniques.

A research team led by Zexuan Li recently studied how textual and nominal (categorical) features affect bug assignment approaches. The team reproduced TextCNN, a well-known convolutional neural network for text classification, to determine whether advances in NLP lead to better performance on textual features. Surprisingly, even with this more advanced technique, textual features did not outperform other types of features.
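For readers unfamiliar with TextCNN, here is a minimal sketch of the forward pass of a TextCNN-style classifier in plain Python: word embeddings, one-dimensional convolutions of several widths, max-over-time pooling, and a softmax over output classes (in bug assignment, the candidate developers). It rests on stated assumptions: the weights are random rather than trained, dropout and training are omitted, and the class name `TinyTextCNN` and all sizes are hypothetical, not taken from the study's reproduction.

```python
import math
import random


def relu(x: float) -> float:
    return x if x > 0.0 else 0.0


def softmax(scores: list[float]) -> list[float]:
    # Subtract the max score for numerical stability.
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


class TinyTextCNN:
    """Hypothetical forward pass of a TextCNN-style classifier with random (untrained) weights."""

    def __init__(self, vocab_size: int, embed_dim: int, filter_widths: tuple[int, ...],
                 filters_per_width: int, num_classes: int, seed: int = 0) -> None:
        rng = random.Random(seed)

        def init(rows: int, cols: int) -> list[list[float]]:
            return [[rng.uniform(-0.1, 0.1) for _ in range(cols)] for _ in range(rows)]

        # One embedding vector per token ID.
        self.embeddings = init(vocab_size, embed_dim)
        # Each filter spans `width` consecutive tokens across the full embedding dimension.
        self.filters = [(width, init(width, embed_dim), 0.0)
                        for width in filter_widths
                        for _ in range(filters_per_width)]
        # Linear output layer: one row of weights per class (candidate developer).
        self.out_w = init(num_classes, len(self.filters))
        self.out_b = [0.0] * num_classes

    def forward(self, token_ids: list[int]) -> list[float]:
        x = [self.embeddings[t] for t in token_ids]
        pooled = []
        for width, weights, bias in self.filters:
            activations = []
            # Slide the filter over every window of `width` consecutive tokens.
            for start in range(len(x) - width + 1):
                s = bias
                for offset in range(width):
                    s += sum(w * v for w, v in zip(weights[offset], x[start + offset]))
                activations.append(relu(s))
            # Max-over-time pooling; texts shorter than the filter contribute 0.0.
            pooled.append(max(activations) if activations else 0.0)
        scores = [sum(w * f for w, f in zip(row, pooled)) + b
                  for row, b in zip(self.out_w, self.out_b)]
        return softmax(scores)


if __name__ == "__main__":
    model = TinyTextCNN(vocab_size=50, embed_dim=8, filter_widths=(2, 3, 4),
                        filters_per_width=4, num_classes=5)
    probs = model.forward([3, 17, 42, 8, 1])  # hypothetical token IDs of one report
    print([round(p, 3) for p in probs])  # one probability per candidate developer
```

In a real pipeline the token IDs would come from a tokenized bug report and the weights would be learned; the original TextCNN design commonly uses filter widths of 3, 4, and 5.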

Digging into which features matter most for bug assignment, the team found that the most influential ones were nominal features reflecting developer preferences. Their experiments showed that nominal features alone, without any textual information, could achieve competitive results.
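To make the idea concrete, here is a deliberately simple sketch of assignment from nominal features alone: it records which developers fixed reports with each combination of nominal field values and recommends the most frequent ones. This is not the study's model; the field names (`product`, `component`), the `assignee` key, and the history data are hypothetical, and a frequency lookup stands in for a trained classifier.

```python
from collections import Counter, defaultdict


def train_nominal_assigner(reports: list[dict[str, str]], fields: list[str]):
    """Count, for each combination of nominal field values, who fixed those reports."""
    by_key: dict[tuple[str, ...], Counter] = defaultdict(Counter)
    overall: Counter = Counter()
    for report in reports:
        key = tuple(report[f] for f in fields)
        by_key[key][report["assignee"]] += 1  # hypothetical "assignee" field
        overall[report["assignee"]] += 1
    return by_key, overall


def recommend(model, report: dict[str, str], fields: list[str], k: int = 1) -> list[str]:
    """Return up to k developers, most frequent first, for the report's field values."""
    by_key, overall = model
    key = tuple(report[f] for f in fields)
    # Fall back to the globally most active developers for unseen combinations.
    counts = by_key.get(key) or overall
    return [dev for dev, _ in counts.most_common(k)]


if __name__ == "__main__":
    # Hypothetical history of fixed reports with two nominal fields.
    history = [
        {"product": "Core", "component": "Networking", "assignee": "alice"},
        {"product": "Core", "component": "Networking", "assignee": "alice"},
        {"product": "Core", "component": "Networking", "assignee": "bob"},
        {"product": "Core", "component": "Graphics", "assignee": "carol"},
        {"product": "Tools", "component": "Build", "assignee": "dave"},
    ]
    fields = ["product", "component"]
    model = train_nominal_assigner(history, fields)
    print(recommend(model, {"product": "Core", "component": "Networking"}, fields, k=2))
    # → ['alice', 'bob']
```

Even this lookup captures who tends to work on which part of a product, which is the kind of developer preference the nominal features encode.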

Three key questions guided the team's research. Firstly, they examined how effective textual features are when combined with deep-learning-based NLP techniques. In their experiments with TextCNN, they compared textual features against nominal features and found the latter to be more influential.

Secondly, the team set out to identify the most influential features for bug assignment approaches and to explain why they matter. Using a wrapper-based feature selection method with a bidirectional search strategy, they ranked features by importance according to their chosen evaluation metrics. The results highlighted the value of nominal features in narrowing the classifier's search scope, that is, the pool of candidate developers.
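A bidirectional wrapper search can be sketched as follows. At each step it tries adding every unselected feature (forward) and removing every selected one (backward), scores each candidate subset with a user-supplied function, and keeps the best move until no move improves the score. This is one simple stepwise variant and may differ from the exact procedure used in the study; in practice `score` would be something like the cross-validated accuracy of a classifier trained on that subset, while `toy_score` and its feature names are hypothetical stand-ins.

```python
from typing import Callable, FrozenSet, Iterable, Tuple


def bidirectional_wrapper_select(
    features: Iterable[str],
    score: Callable[[FrozenSet[str]], float],
    min_gain: float = 1e-9,
) -> Tuple[FrozenSet[str], float]:
    """Stepwise search that may add or remove one feature per step.

    `score` evaluates a feature subset, e.g. the accuracy of a classifier
    trained only on those features (this is what makes it a wrapper method).
    """
    all_features = frozenset(features)
    selected: FrozenSet[str] = frozenset()
    best = score(selected)
    while True:
        # Forward moves: add one unselected feature (sorted for deterministic ties).
        candidates = [selected | {f} for f in sorted(all_features - selected)]
        # Backward moves: drop one selected feature.
        candidates += [selected - {f} for f in sorted(selected)]
        if not candidates:
            break
        cand_score, cand = max(((score(c), c) for c in candidates), key=lambda p: p[0])
        if cand_score - best <= min_gain:
            break  # no single move improves the score enough
        selected, best = cand, cand_score
    return selected, best


def toy_score(subset: FrozenSet[str]) -> float:
    """Hypothetical stand-in for classifier accuracy, with feature interactions."""
    weights = {"product": 0.30, "component": 0.28, "reporter": 0.20}
    value = sum(weights[f] for f in subset) - 0.05 * len(subset)  # per-feature cost
    if {"component", "reporter"} <= subset:
        value += 0.15  # complementary pair
    if {"product", "component"} <= subset:
        value -= 0.30  # redundant pair
    return value


if __name__ == "__main__":
    chosen, best = bidirectional_wrapper_select(["product", "component", "reporter"], toy_score)
    print(sorted(chosen), round(best, 2))  # → ['component', 'reporter'] 0.53
```

In the toy run, `product` is selected first; once `component` joins `reporter`, a backward step drops `product`, which the toy score treats as redundant with `component`. A purely forward search would have stopped with all three features.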

Lastly, the team evaluated how much the selected influential features could improve bug assignment. They trained models on different groups of features using popular classifiers such as Decision Tree and SVM. While improved NLP techniques had limited impact, the selected key features improved accuracy by 11% to 25%.

Moving forward, future research could integrate source files to build a knowledge graph that links influential features with descriptive words. Such a graph could yield better embeddings of nominal features and further improve the performance of bug assignment systems.