The rise of artificial intelligence in social media has opened doors to enhanced user interaction, but it also poses significant privacy concerns. Recently, Meta has rolled out a feature that enables AI-driven responses in personal messaging chats across its platforms—Facebook, Instagram, Messenger, and WhatsApp. While this innovation aims to enrich user experience, it raises pressing questions about data privacy, informed consent, and the real utility of such features.

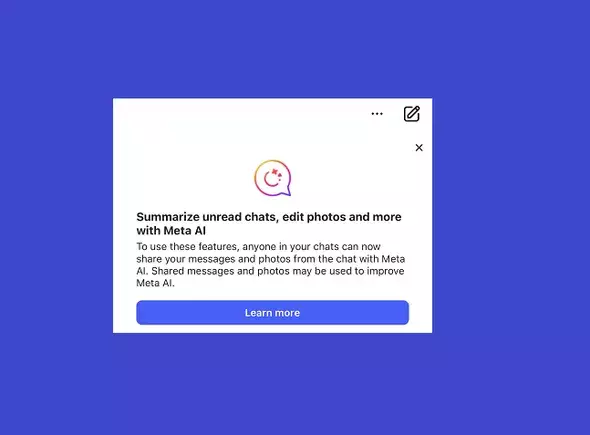

Meta users have begun encountering notifications regarding the integration of AI capabilities into their direct messages (DMs). The company has initiated this rollout ostensibly to enhance communication by allowing the AI to provide quick answers and insights within ongoing conversations. The catch, however, is an implicit agreement that users may not have fully grasped when they signed up for the service. According to Meta, while the AI can facilitate conversations, it may also process information shared within those chats for training purposes.

This means that any messages, media, or sensitive data could potentially be included in the training datasets used to improve AI responses. Meta has made an effort to clarify this procedure by encouraging users to be cautious about sharing sensitive information within chats, given that this data may be shared. That said, the suggestion to be ‘mindful’ about what to share feels more like an afterthought than a proactive measure for protecting user privacy.

Engaging with AI within personal conversations may seem innocuous at first glance. However, the implications are far more complex. Meta’s notification underscores that not all information remains confidential, as others in a conversation can share messages with the AI. This interconnectedness of data raises red flags. For instance, if one individual shares sensitive information within a group chat, another participant could inadvertently feed that data into the AI’s ecosystem.

The reality is that while Meta makes efforts to anonymize the content fed into its systems, the risk of unintentional exposure remains high. If a user wishes to ask the AI about a sensitive topic during a group chat, they must weigh their options carefully, knowing that the information could escape their intended boundaries. In essence, the privacy warning is akin to asking users to tread lightly while knowingly walking through a minefield.

From a utility standpoint, the question arises: is having Meta AI accessible in chats worth the potential risk of data exposure? Many users might argue that regardless of the innovative AI features, the practical benefits do not outweigh the concerns surrounding personal data privacy. The suggestion of maintaining an AI-specific chat to avoid compromising sensitive information only serves to complicate the experience. Is it truly convenient to juggle multiple chat windows just to safeguard one’s privacy?

For those who frequently use DMs for private conversations, the fear of having their conversations analyzed, even with the aim of improving AI functionality, can be enough to deter them from using these integrated features entirely. Having to either practice self-censorship or forgo the convenience of integrated AI underscores the ongoing tension between technological advancement and user privacy.

Meta’s pop-up notification is a strategic move to sidestep potential backlash while highlighting the boundaries of user consent. Essentially, users have already consented to broad data usage terms upon signing up, and the latest development does little more than bring this reality into sharper focus. For privacy-conscious individuals, this serves as a wake-up call: remaining vigilant about what is shared in digital spaces is more critical than ever.

Users can opt out of engaging with AI by simply refraining from tagging @MetaAI in chats, yet this option feels reductive, since it does nothing to stop other participants from invoking the AI. Additionally, deleting chats or modifying messages is merely a band-aid solution that does not address the core of the privacy dilemma. Ultimately, individual user responsibility must accompany technological innovation.

As Meta continues to explore AI integrations into its messaging systems, users find themselves navigating an increasingly complex landscape of privacy considerations and technological conveniences. The marriage of AI capabilities with personal communication inherently brings risks and benefits, presenting users with choices that could significantly impact their digital lives. The choice between embracing Meta’s AI tools and remaining cautious highlights a fundamental premise of modern technology: with great convenience comes the need for heightened awareness and responsibility.